Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Immuta integrates with your data platforms so you can register your data and effectively manage access controls on that data.

This section includes guidance for connecting your data platform and keeping it synced with Immuta.

This reference guide outlines the features, policies, and audit capabilities supported by each integration.

The guides in these sections include information about how to connect your data platform to Immuta.

This reference guide outlines the actions and features that trigger Immuta queries in your remote platform that may incur cost.

Immuta integrates with your data platforms so you can register your data and effectively manage access controls on that data. This section includes concept, reference, and how-to guides for registering and managing data sources and your connections.

In this integration, Immuta generates policy-enforced views in a schema in your configured Azure Synapse Analytics Dedicated SQL pool for tables registered as Immuta data sources.

This guide outlines how to integrate Azure Synapse Analytics with Immuta.

: Configure the integration in Immuta.

: This guide describes the design and components of the integration.

: This guide describes the prerequisites, supported features, and limitations of the integration.

The how-to guides linked on this page illustrate how to integrate Azure Synapse Analytics with Immuta. See the reference guide for information about the Azure Synapse Analytics integration.

Requirement: A running Dedicated SQL pool

Connect your technology

These guides provide instructions on getting your data set up in Immuta.

: Configure an Azure Synapse Analytics integration with Immuta so that Immuta can create policy protected views for your users to query.

: This will register your data objects into Immuta and allow you to start dictating access through global policies.

: Use domains to segment your data and assign responsibilities to the appropriate team members. These domains will then be used in policies.

Register your users

These guides provide instructions on getting your users set up in Immuta.

: Bring the IAM your organization already uses and allow Immuta to register your users for you.

Add data metadata

These guides provide instructions on getting your data metadata set up in Immuta.

: Bring the external catalog your organization already uses and allow Immuta to continually sync your tags with your data sources for you.

Start using the Governance app

These guides provide instructions on using the Governance app for the first time.

: Once you add your data metadata to Immuta, you can immediately create policies that utilize your tags and apply to your tables. Subscription policies can be created to dictate access to data sources.

To migrate from the private preview version of table grants (available before September 2022) to the GA version, complete the steps below.

Navigate to the App Settings page.

Click Integration Settings in the left panel, and scroll to the Global Integrations Settings section.

Click Save. Wait for about 1 minute per 1000 users. This gives time for Immuta to drop all the previously created user roles.

Use the Enable Snowflake table grants tutorial to re-enable the feature.

Configure audit: Once you have your data sources and users, and policies granting them access, you can set up audit export. This will export the audit logs from policy changes and tagging updates.

This page provides a tutorial for enabling the Azure Synapse Analytics integration on the Immuta app settings page. To configure this integration via the Immuta API, see the Integrations API getting started guide.

For an overview of the integration, see the Azure Synapse Analytics overview documentation.

A running Dedicated SQL pool is required.

If you are using the OAuth authentication method,

Ensure that Microsoft Entra ID is on the same account as the Azure Synapse Analytics workspace and dedicated SQL pool.

.

Select Accounts in this organizational directory only as the account type.

Click the App Settings icon in the left sidebar.

Click the Integrations tab.

Click the +Add Integration button and select Azure Synapse Analytics from the dropdown menu.

You have two options for configuring your Azure Synapse Analytic environment:

: Grant Immuta one-time use of credentials to automatically configure your environment and the integration.

: Run the Immuta script in your Azure Synapse Analytics environment yourself to configure the integration.

Enter the username and password in the Privileged User Credentials section.

Select Manual.

Download, fill out the appropriate fields, and run the bootstrap master script and bootstrap script linked in the Setup section. Note: The master script is not required if you're using the OAuth authentication method.

Select the authentication method:

Click Save.

.

Click the App Settings icon in the left sidebar.

Navigate to the Integrations tab and click the down arrow next to the Azure Synapse Analytics Integration.

Edit the field you want to change. Note any field shadowed is not editable, and the integration must be disabled and re-installed to change it.

Immuta requires temporary, one-time use of credentials with specific permissions.

When performing edits to an integration, Immuta requires temporary, one-time use of credentials of a Superuser or a user with the Manage GRANTS permission

Alternatively, you can download the Edit Script from your Azure Synapse Analytics configuration on the Immuta app settings page and run it in Azure Synapse Analytics.

Click the App Settings icon in the left sidebar.

Navigate to the Integrations tab and click the down arrow next to the Azure Synapse Analytics Integration.

Click the checkbox to disable the integration.

This integration enforces policies on Databricks securables registered in the legacy Hive metastore. Once these securables are registered as Immuta data sources, users can query policy-enforced data on Databricks clusters.

The guides in this section outline how to integrate Databricks Spark with Immuta.

This getting started guide outlines how to integrate Databricks with Immuta.

: Manually update your cluster to reflect changes in the Immuta init script or cluster policies.

: Register a Databricks library with Immuta as a trusted library to avoid Immuta security manager errors when using third-party libraries.

: This guide describes the design and components of the integration.

: This guide provides an overview of the Immuta features that provide security for your users and Databricks clusters and that allow you to prove compliance and monitor for anomalies.

: This guide provides an overview of registering Databricks securables and protecting them with Immuta policies.

If a Databricks cluster needs to be manually updated to reflect changes in the Immuta init script or cluster policies, you can remove and set up your integration again to get the updated policies and init script.

Log in to Immuta as an Application Admin.

Click the App Settings icon in the navigation menu and scroll to the Integration Settings section.

Your existing Databricks Spark integration should be listed here; expand it and note the configuration values. Now select Remove to remove your integration.

Click Add Integration and select Databricks Integration to add a new integration.

Enter your Databricks Spark integration settings again as configured previously.

Click Add Integration to add the integration, and then select Configure Cluster Policies to set up the updated cluster policies and init script.

Select the cluster policies you wish to use for your Immuta-enabled Databricks clusters.

Automatically push cluster policies and the init script (recommended) or manually update your cluster policies.

Automatically push cluster policies

Select Automatically Push Cluster Policies and enter your privileged Databricks access token. This token must have privileges to write to cluster policies.

Restart any Databricks clusters using these updated policies for the changes to take effect.

Databricks Libraries API: Installing trusted libraries outside of the Databricks Libraries API (e.g., ADD JAR ...) is not supported.

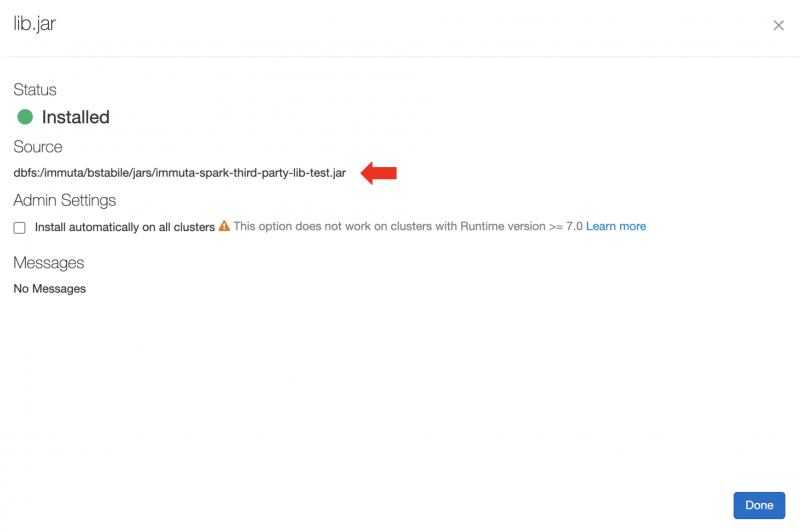

In the Databricks Clusters UI, install your third-party library .jar or Maven artifact with Library Source Upload, DBFS, DBFS/S3, or Maven. Alternatively, use the Databricks libraries API.

In the Databricks Clusters UI, add the IMMUTA_SPARK_DATABRICKS_TRUSTED_LIB_URIS property as a Spark environment variable and set it to your artifact's URI. To specify more than one trusted library, comma delimit the URIs:

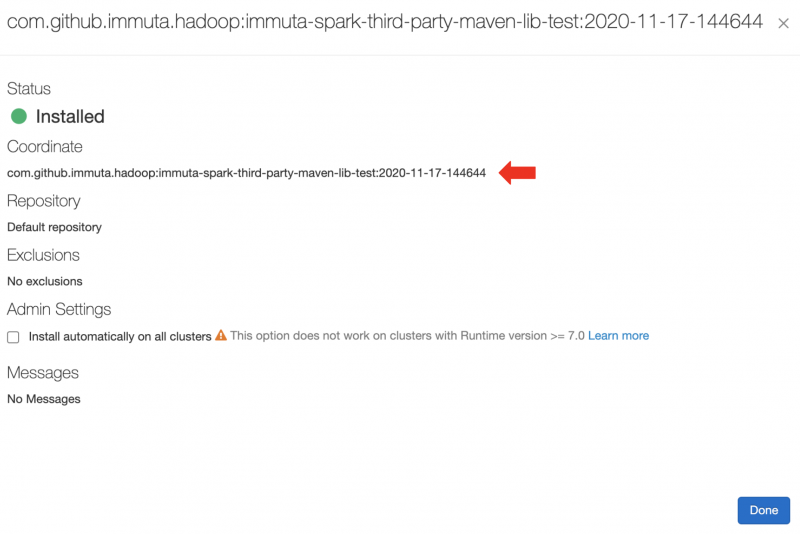

For Maven artifacts, the URI is maven:/<maven_coordinates>, where <maven_coordinates> is the Coordinates field found when clicking on the installed artifact on the Libraries tab in the Databricks Clusters UI. Here's an example of an installed artifact:

In this example, you would add the following Spark environment variable:

For jar artifacts, the URI is the Source field found when clicking on the installed artifact on the Libraries tab in the Databricks Clusters UI. For artifacts installed from DBFS or S3, this ends up being the original URI to your artifact. For uploaded artifacts, Databricks will rename your .jar and put it in a directory in DBFS. Here's an example of an installed artifact:

Restart the cluster.

Once the cluster is up, execute a command in a notebook. If the trusted library installation is successful, you should see driver log messages like this:

This page outlines the configuration for setting up project UDFs, which allow users to set their current project in Immuta through Spark. For details about the specific functions available and how to use them, see the Use Project UDFs (Databricks) page.

Use project UDFs in Databricks Spark

Currently, caches are not all invalidated outside of Databricks because Immuta caches information pertaining to a user's current project. Consequently, this feature should only be used in Databricks.

Lower the web service cache timeout in Immuta:

Click the App Settings icon and scroll to the HDFS Cache Settings section.

Lower the Cache TTL of HDFS user names (ms) to 0.

Raise the cache timeout on your Databricks cluster: In the Spark environment variables section, set the IMMUTA_CURRENT_PROJECT_CACHE_TIMEOUT_SECONDS and IMMUTA_PROJECT_CACHE_TIMEOUT_SECONDS to high values (like 10000).

Note: These caches will be invalidated on cluster when a user calls immuta.set_current_project, so they can effectively be cached permanently on cluster to avoid periodically reaching out to the web service.

This page provides guidelines for troubleshooting issues with the Databricks Spark integration and resolving Py4J security and Databricks trusted library errors.

For easier debugging of the Databricks Spark integration, follow the recommendations below.

Enable cluster init script logging:

In the cluster page in Databricks for the target cluster, navigate to Advanced Options -> Logging.

Change the Destination from NONE to DBFS and change the path to the desired output location. Note: The unique cluster ID will be added onto the end of the provided path.

View the Spark UI on your target Databricks cluster: On the cluster page, click the Spark UI tab, which shows the Spark application UI for the cluster. If you encounter issues creating Databricks data sources in Immuta, you can also view the JDBC/ODBC Server portion of the Spark UI to see the result of queries that have been sent from Immuta to Databricks.

The validation and debugging notebook is designed to be used by or under the guidance of an Immuta support professional. Reach out to your Immuta representative for assistance.

Import the notebook into a Databricks workspace by navigating to Home in your Databricks instance.

Click the arrow next to your name and select Import.

Once you have executed commands in the notebook and populated it with debugging information, export the notebook and its contents by opening the File menu, selecting Export, and then selecting DBC Archive.

Error Message: py4j.security.Py4JSecurityException: Constructor <> is not allowlisted

Explanation: This error indicates you are being blocked by Py4J security rather than the Immuta Security Manager. Py4J security is strict and generally ends up blocking many ML libraries.

Solution: Turn off Py4J security on the offending cluster by setting IMMUTA_SPARK_DATABRICKS_PY4J_STRICT_ENABLED=false

Check the driver logs for details. Some possible causes of failure include

One of the Immuta-configured trusted library URIs does not point to a Databricks library. Check that you have configured the correct URI for the Databricks library.

For trusted Maven artifacts, the URI must follow this format: maven:/group.id:artifact-id:version.

Databricks failed to install a library. Any Databricks library installation errors will appear in the Databricks UI under the Libraries tab.

The Databricks Spark integration is one of two integrations Immuta offers for Databricks.

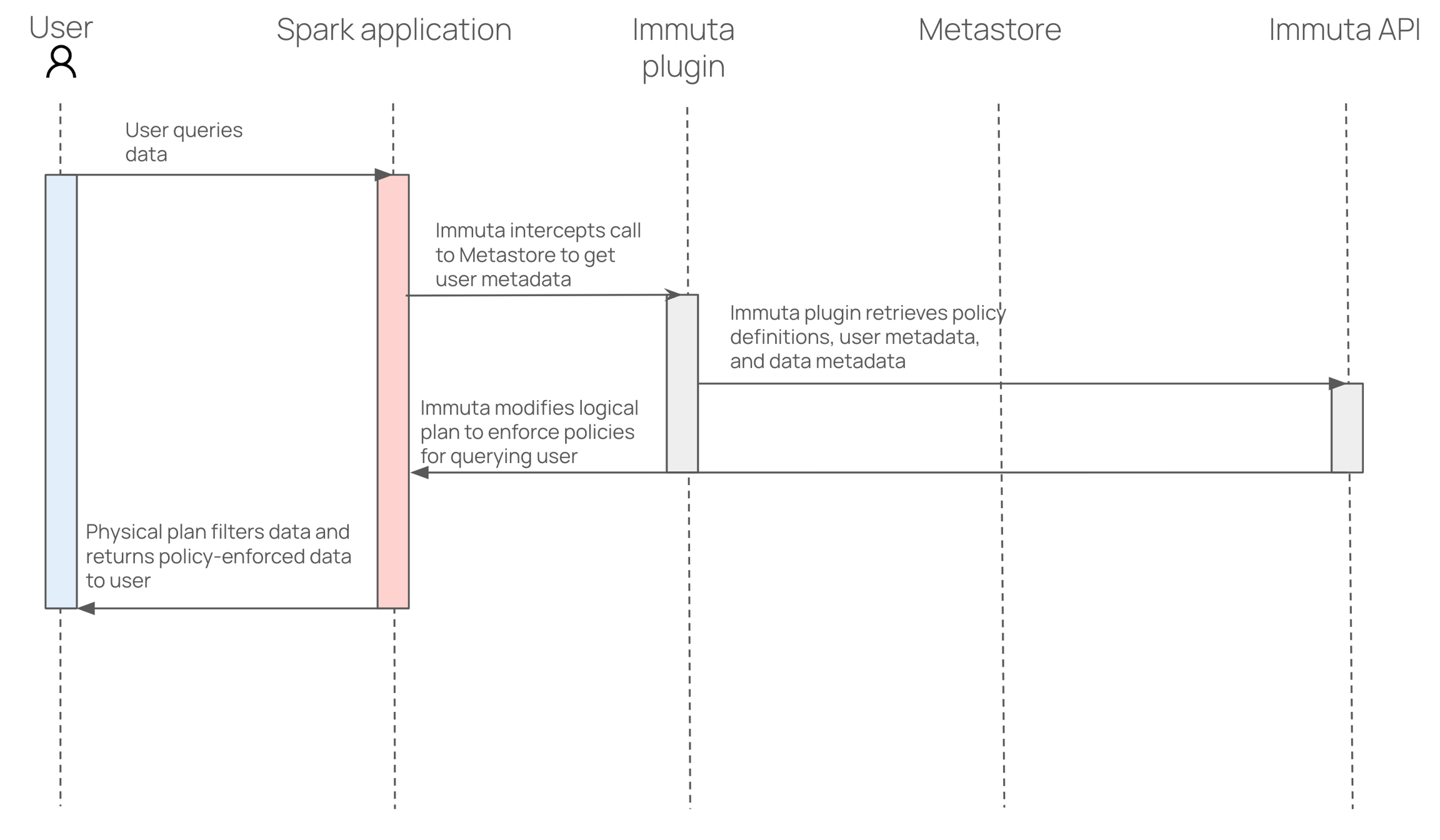

In this integration, Immuta installs an Immuta-maintained Spark plugin on your Databricks cluster. When a user queries data that has been registered in Immuta as a data source, the plugin injects policy logic into the plan Spark builds so that the results returned to the user only include data that specific user should see.

The reference guides in this section are written for Databricks administrators who are responsible for setting up the integration, securing Databricks clusters, and setting up users:

: This guide includes information about what Immuta creates in your Databricks environment and securing your Databricks clusters.

: Consult this guide for information about customizing the Databricks Spark integration settings.

: Consult this guide for information about connecting data users and setting up user impersonation.

: This guide provides a list of Spark environment variables used to configure the integration.

: This guide describes ephemeral overrides and how to configure them to reduce the risk that a user has overrides set to a cluster (or multiple clusters) that aren't currently up.

When you enable Unity Catalog, Immuta automatically migrates your existing Databricks data sources in Immuta to reference the legacy hive_metastore catalog to account for Unity Catalog's three-level hierarchy. New data sources will reference the Unity Catalog metastore you create and attach to your Databricks workspace.

Because the hive_metastore catalog is not managed by Unity Catalog, existing data sources in the hive_metastore cannot have Unity Catalog access controls applied to them. Data sources in the Hive Metastore must be managed by the Databricks Spark integration.

To allow Immuta to administer Unity Catalog access controls on that data, move the data to Unity Catalog and re-register those tables in Immuta by completing the steps below. If you don't move all data before configuring the integration, metastore magic will protect your existing data sources throughout the migration process.

Ensure that all Databricks clusters that have Immuta installed are stopped and the Immuta configuration is removed from the cluster. Immuta-specific cluster configuration is no longer needed with the Databricks Unity Catalog integration.

Move all data into Unity Catalog before configuring Immuta with Unity Catalog. Existing data sources will need to be re-created after they are moved to Unity Catalog and the Unity Catalog integration is configured.

.

Navigate to the App Settings page.

Scroll to the Global Integrations Settings section.

Opt to change the Role Prefix. Snowflake table grants creates a new Snowflake role for each Immuta user. To ensure these Snowflake role names do not collide with existing Snowflake roles, each Snowflake role created for Snowflake table grants requires a common prefix. When using multiple Immuta accounts within a single Snowflake account, the Snowflake table grants role prefix should be unique for each Immuta account. The prefix must adhere to and be less than 50 characters. Once the configuration is saved, the prefix cannot be modified; however, the Snowflake table grants feature can be disabled and re-enabled to change the prefix.

Finish configuring your integration by following one of these guidelines:

New Snowflake integration: Set up a new Snowflake integration by following the .

Existing Snowflake integration (automatic setup): You will be prompted to enter connection information for a Snowflake user. Immuta will execute the migration to Snowflake table grants using a connection established with this Snowflake user. The Snowflake user you provide here must have Snowflake privileges to run these .

Snowflake table grants private preview migration

To migrate from the private preview version of Snowflake table grants (available before September 2022) to the generally available version of Snowflake table grants, follow the steps in the .

Immuta is compatible with Snowflake Secure Data Sharing. Using both Immuta and Snowflake, organizations can share the policy-protected data of their Snowflake database with other Snowflake accounts with Immuta policies enforced in real time.

Prerequisites:

Required Permission: Immuta: GOVERNANCE

to fit your organization's compliance requirements.

It's important to understand that subscription policies are not relevant to Snowflake data shares, because the act of sharing the data is the subscription policy. Data policies can be enforced on the consuming account from the producer account on a share following these instructions.

Required Permission: Immuta: USER_ADMIN

To register the Snowflake data consumer in Immuta,

.

to match the account ID for the data consumer. This value is the output on the data consumer side when SELECT CURRENT_ACCOUNT() is run in Snowflake.

for your organization's policies.

Required Permission: Snowflake ACCOUNTADMIN

To share the policy-protected data source,

of the Snowflake table that has been registered in Immuta.

Grant reference usage on the Immuta database to the share you created:

Replace the content in angle brackets above with the name of your Immuta database and Snowflake data share.

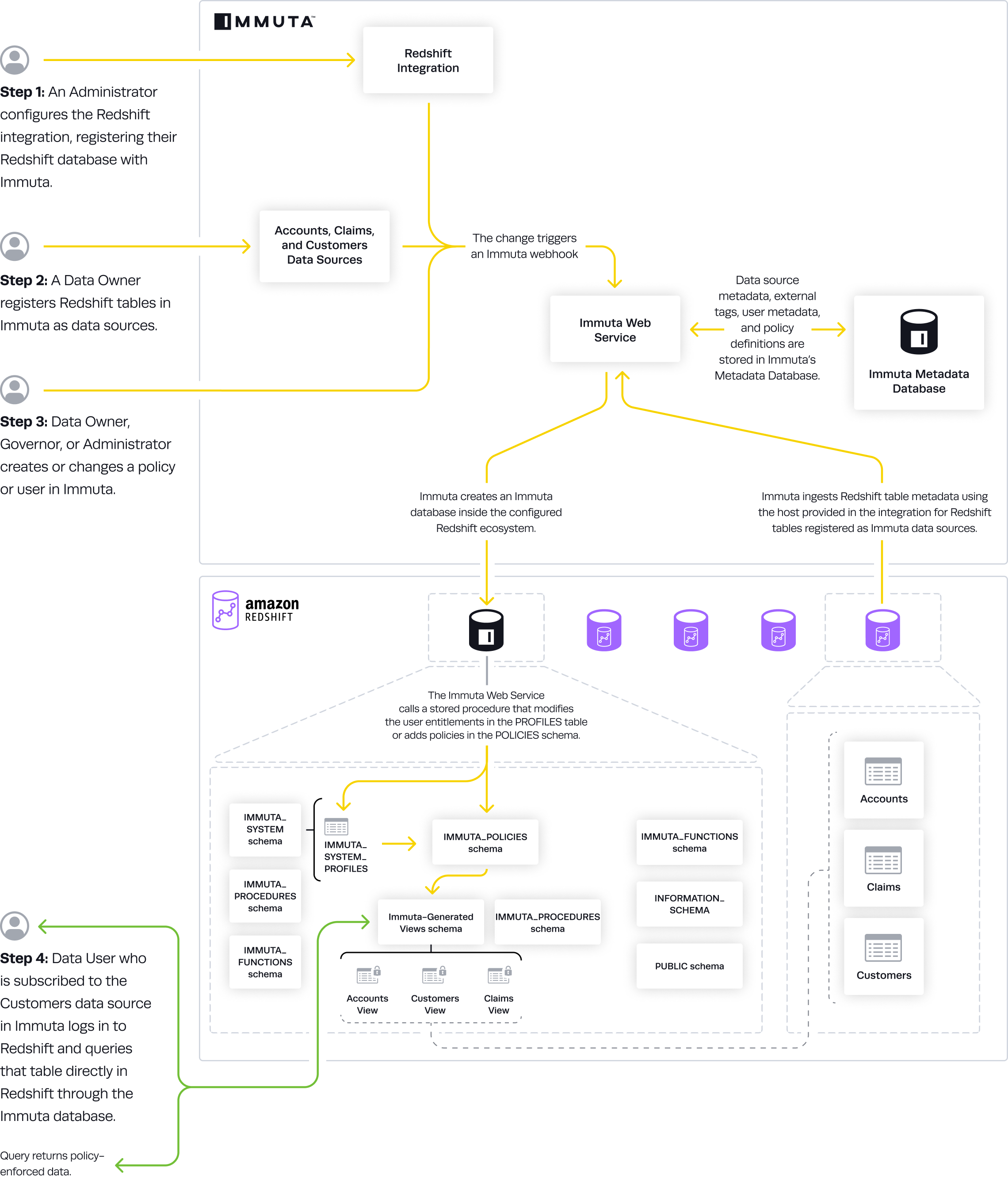

In this integration, Immuta generates policy-enforced views in your configured Redshift schema for tables registered as Immuta data sources.

This guide outlines how to integrate Redshift with Immuta.

: Configure the integration in Immuta.

: Configure Redshift Spectrum in Immuta.

: This guide describes the design and components of the integration.

: This guide describes the prerequisites, supported features, and limitations of the integration.

This integration allows you to manage and access data in your Databricks account across all of your workspaces. With Immuta’s Databricks Unity Catalog integration, you can write your policies in Immuta and have them enforced automatically by Databricks across data in your Unity Catalog metastore.

This getting started guide outlines how to integrate Databricks Unity Catalog with Immuta.

: Migrate from the legacy Databricks Spark integrations to the Databricks Unity Catalog integration.

: This guide describes the design and components of the integration.

Immuta integrates with your data platforms so you can register your data and effectively manage access controls on that data.

This section includes concept, reference, and how-to guides for registering and managing data.

This section includes reference and how-to guides for configuring Immuta in order to manage data through a single connection between Immuta and your data platform.

This section covers concepts related to registering your data objects in Immuta as data sources.

A data source is how data owners expose their data across their organization to other Immuta users. Throughout this process, the data is not copied. Instead, Immuta uses metadata from the data source to determine how to expose the data. An Immuta data source is a virtual representation of data that exists in a remote data platform.

This section includes reference and how-to guides for registering and managing data sources.

This reference guide describes Immuta data sources and their major components.

These how-to guides illustrate how to register data in Immuta.

The guides in this section illustrate how to manage and edit data sources and data dictionaries.

The reference and how-to guides in this section describe schema monitoring and illustrate how to configure it for your integration.

This upgrade step is necessary if you meet both of the following criteria:

You have the Snowflake low row access policy mode enabled in private preview.

You have user impersonation enabled.

If you do not meet this criteria, follow the instructions on the .

To upgrade to the generally available version of the feature, on the app settings page and then re-enable it.

When a data source is exposed, policies are dynamically enforced on the data, appropriately redacting and masking information depending on the attributes or groups of the user accessing the data. Once the data source is exposed and subscribed to, the data can be accessed in a consistent manner, allowing reproducibility and collaboration.

This section includes how-to guides for registering data sources in Immuta:

This page describes the Azure Synapse Analytics integration, through which Immuta applies policies directly in Azure Synapse Analytics. For a tutorial on configuring Azure Synapse Analytics see the .

The Azure Synapse Analytics is a policy push integration that allows Immuta to apply policies directly in Azure Synapse Analytics Dedicated SQL pools without the need for users to go through a proxy. Instead, users can work within their existing Synapse Studio and have per-user policies dynamically applied at query time.

The how-to guides linked on this page illustrate how to integrate Databricks Spark with Immuta.

Requirements

If Databricks Unity Catalog is enabled in a Databricks workspace, you must use an when you set up the Databricks Spark integration to create an Immuta-enabled cluster.

If Databricks Unity Catalog is not enabled in your Databricks workspace, you must disable Unity Catalog in your Immuta tenant before proceeding with your configuration of Databricks Spark:

This page outlines how to enable access to DBFS in Databricks for non-sensitive data. Databricks administrators should place the desired configuration in the Spark environment variables.

This Databricks feature mounts DBFS to the local cluster filesystem at /dbfs. Although disabled when using process isolation, this feature can safely be enabled if raw, unfiltered data is not stored in DBFS and all users on the cluster are authorized to see each other’s files. When enabled, the entirety of DBFS essentially becomes a scratch path where users can read and write files in /dfbs/path/to/my/file

In the context of the Databricks Spark integration, Immuta uses the term ephemeral to describe data sources where the associated compute resources can vary over time. This means that the compute bound to these data sources is not fixed and can change. All Databricks data sources in Immuta are ephemeral.

Ephemeral overrides are specific to each data source and user. They effectively bind cluster compute resources to a data source for a given user. Immuta uses these overrides to determine which cluster compute to use when connecting to Databricks for various maintenance operations.

The operations that use the ephemeral overrides include

Visibility checks on the data source for a particular user. These checks assess how to apply row-level policies for specific users.

Delta Lake API reference guide

When using Delta Lake, the API does not go through the normal Spark execution path. This means that Immuta's Spark extensions do not provide protection for the API. To solve this issue and ensure that Immuta has control over what a user can access, the Delta Lake API is blocked.

Spark SQL can be used instead to give the same functionality with all of Immuta's data protections.

Below is a table of the Delta Lake API with the Spark SQL that may be used instead.

The how-to guides linked on this page illustrate how to integrate Databricks Unity Catalog with Immuta. See the for information about the Databricks Unity Catalog integration.

Requirements:

Unity Catalog and attached to a Databricks workspace. Immuta supports configuring a single metastore for each configured integration, and that metastore may be attached to multiple Databricks workspaces.

Unity Catalog enabled on your Databricks cluster or SQL warehouse. All SQL warehouses have Unity Catalog enabled if your workspace is attached to a Unity Catalog metastore.

The how-to guides linked on this page illustrate how to integrate Snowflake with Immuta. See the for information about the Snowflake integration.

Requirements

Snowflake enterprise edition

Access to a Snowflake account that can create a Snowflake user

Snowflake with low row access policy mode enabled will soon be required

Support for disabling this feature has been deprecated. You must have Snowflake low row access policy mode and enabled for your integration to continue working. Furthermore, (which require table grants to be disabled) will be unavailable. See the for EOL dates.

The Snowflake low row access policy mode improves query performance in Immuta's Snowflake integration by decreasing the number of Immuta creates and by using table grants to manage user access.

Immuta is compatible with . Using both Immuta and Snowflake, organizations can share the policy-protected data of their Snowflake database with other Snowflake accounts with Immuta policies enforced in real time. This integration gives data consumers a live connection to the data and relieves data providers of the legal and technical burden of creating static data copies that leave their Snowflake environment.

Requirements:

Snowflake Enterprise Edition or higher

Immuta's

Connections allow you to register your data objects in a technology through a single connection, making data registration more scalable for your organization. Instead of registering schema and databases individually, you can register them all at once and allow Immuta to monitor your data platform for changes so that data sources are added and removed automatically to reflect the state of data on your data platform.

Register a connection:

Connections are an improvement from the existing process for not only onboarding your data sources but also managing the integration. However, there are some differences between the two processes that should be noted and understood before you start with the upgrade.

API changes: See the for a complete breakdown of the APIs that will not work once you begin the upgrade. These changes will mostly affect users with automated API calls around schema monitoring and data source registration.

Automated data source names: Previously, you could name data sources manually. However, data sources from connections are automatically named using the information (database, schema, table) and casing from your data platform. For example, on Snowflake this will typically mean that my_table

If you have Snowflake low row access policy mode enabled in private preview and have impersonation enabled, see these . Otherwise, query performance will be negatively affected.

Click the App Settings icon in the sidebar and scroll to the Global Integration Settings section.

Select Apply Policies to push the cluster policies and init script again.

Click Save and Confirm to deploy your changes.

Manually update cluster policies

Download the init script and the new cluster policies to your local computer.

Click Save and Confirm to save your changes in Immuta.

Log in to your Databricks workspace with your administrator account to set up cluster policies.

Get the path you will upload the init script (immuta_cluster_init_script_proxy.sh) to by opening one of the cluster policy .json files and looking for the defaultValue of the field init_scripts.0.dbfs.destination. This should be a DBFS path in the form of dbfs:/immuta-plugin/hostname/immuta_cluster_init_script_proxy.sh.

Click Data in the left pane to upload your init script to DBFS to the path you found above.

To find your existing cluster policies you need to update, click Compute in the left pane and select the Cluster policies tab.

Edit each of these cluster policies that were configured before and overwrite the contents of the JSON with the new cluster policy JSON you downloaded.

Stats collection triggered by a specific user.

Validating a custom WHERE clause policy against a data source. When owners or governors create custom WHERE clause policies, Immuta uses compute resources to validate the SQL in the policy. In this case, the ephemeral overrides for the user writing the policy are used to contact a cluster for SQL validation.

High cardinality column detection. Certain advanced policy types (e.g., minimization) in Immuta require a high cardinality column, and that column is computed on data source creation. It can be recomputed on demand and, if so, will use the ephemeral overrides for the user requesting computation.

An ephemeral override request can be triggered when a user queries the securable corresponding to a data source in a Databricks cluster with the Spark plug-in configured. The actual triggering of this request depends on the configuration settings.

Ephemeral overrides can also be set for a data source in the Immuta UI by navigating to the data source page, clicking on the data source actions button, and selecting Ephemeral overrides from the dropdown menu.

Ephemeral override requests made from a cluster for data sources and users where ephemeral overrides were set in the UI will not be successful.

If ephemeral overrides are never set (either through the user interface or the cluster configuration), the system will continue to use the connection details directly associated with the data source, which are set during data source registration.

Ephemeral overrides can be problematic in environments that have a dedicated cluster to handle maintenance activities, since ephemeral overrides can cause these operations to execute on a different cluster than the dedicated one.

To reduce the risk that a user has overrides set to a cluster (or multiple clusters) that aren't currently up, complete one of the following actions:

Direct all clusters' HTTP paths for overrides to a cluster dedicated for metadata queries using the IMMUTA_EPHEMERAL_HOST_OVERRIDE_HTTPPATH Spark environment variable.

Disable ephemeral overrides completely by setting the IMMTUA_EPHEMERAL_HOST_OVERRIDE Spark environment variable to false.

Ephemeral overrides best practices

Disable ephemeral overrides for clusters when using multiple workspaces and dedicate a single cluster to serve queries from Immuta in a single workspace.

If you use multiple E2 workspaces without disabling ephemeral overrides, avoid applying the where user row-level policy to data sources.

Register a Snowflake connection: Register a connection with a Snowflake account and register the data objects within it.

Register a Databricks Unity Catalog connection: Register a connection with a Databricks Unity Catalog metastore and register the data objects within it.

Register a Trino connection: Register a connection with a Trino or Starburst cluster and register the data objects within it.

Manage a connection:

Manage connection settings: Change the object sync settings and manage user permissions for the connection.

Run object sync on a connection or object: Trigger object sync manually for the entire connection or a single object to sync your remote data platform objects with Immuta.

Use the connection upgrade manager: Complete the upgrade path from the existing integrations and data sources to a connection.

Connections reference guide: This reference guide discusses the major concepts, design, and settings of connections.

Upgrading to connections: This reference guide discusses the differences when upgrading from the existing integrations and data sources to a connection.

Click Data and select the Connections tab in the navigation menu.

Click the more actions menu for the connection you want and select Run Object Sync.

Opt to click the checkbox to Also scan all disabled data objects.

Click Run Object Sync.

Click Data and select the Connections tab in the navigation menu.

Select the connection.

Click the more actions menu in the Action column for the database you want to sync and select Run Object Sync.

Opt to click the checkbox to Also scan all disabled data objects.

Click Run Object Sync.

Click Data and select the Connections tab in the navigation menu.

Select the connection.

Select the database.

Click the more actions menu in the Action column for the schema you want to sync and select Run Object Sync.

Opt to click the checkbox to Also scan all disabled data objects.

Click Run Object Sync.

You can run object sync from the data source health check or from the connection,

Click Data and select the Connections tab in the navigation menu.

Select the connection.

Select the database.

Select the schema

Click the more actions menu in the Action column for the data object you want to sync and select Run Object Sync.

Opt to click the checkbox to Also scan all disabled data objects.

Click Run Object Sync

Run R and Scala spark-submit jobs on Databricks: Run R and Scala spark-submit jobs on your Databricks cluster.

DBFS access: Access DBFS in Databricks for non-sensitive data.

Troubleshooting: Resolve errors in the Databricks Spark configuration.

Accessing data: This guide provides an overview of how Databricks users access data registered in Immuta.

Existing Snowflake integration (manual setup): Immuta will display a link to a migration script you must run in Snowflake and a link to a rollback script for use in the event of a failed migration. Important: Execute the migration script in Snowflake before clicking Save on the app settings page.

This method requires that the data consumer account is registered as an Immuta user with the Snowflake user name equal to the consuming account.

At that point, the user that represents the account being shared with can have the appropriate attributes and groups assigned to them, relevant to the data policies that need to be enforced. Once that user has access to the share in the consuming account (not managed by Immuta), they can query the share with the data policies from the producer account enforced because Immuta is treating that account as if they are a single user in Immuta.

For a tutorial on this workflow, see the Using Snowflake Data Sharing page.

Using Immuta with Snowflake Data Sharing allows the sharer to

Only need limited knowledge of the context or goals of the existing policies in place: Because the sharer is not editing or creating policies to share their data, they only need a limited knowledge of how the policies work. Their main responsibility is making sure they properly represent the attributes of the data consumer (the account being shared to).

Leave policies untouched.

My Connection.MY_DATABASE.MY_SCHEMA.MY_TABLEIf you are leveraging Immuta APIs, you may need to adjust code to allow for the new data source names.

Schema projects phased out: With integrations, many settings and the connection info for data sources were controlled in the schema project. This functionality is no longer needed with connections and now you can control connection details in a central spot.

New hierarchy display: With integrations, tables were brought in as data sources and presented as a flat list on the data source list page. With connections, databases and schemas are displayed as objects too.

Change from schema monitoring to object sync: Object metadata synchronization between Immuta and your data platform is no longer optional but always required:

If schema monitoring is off before the upgrade: Once the connection is registered, everything the system user can see will be pulled into Immuta and, if it didn't already exist in Immuta, it will be a disabled object. These disabled objects exist so you can see them, but policy is not protecting the objects, and they will not appear as data sources.

If schema monitoring is on before the upgrade: Once the connection is registered, everything the system user can see will be pulled into Immuta. If it already existed in Immuta, it will be an enabled object and continue to appear as data source.

Enabling a connection will enable all databases, schemas, and tables in the hierarchy: If the connection is disabled after completing your upgrade to connections, only enable the host if you want to enable all databases, schemas, and tables within it.

Enabling a table that is ordinarily disabled will elevate it to a data source. Immuta will then apply data and subscription policies on that data source.

Click the Enable Snowflake Low Row Access Policy Mode checkbox to enable the feature.

Confirm to allow Immuta to automatically disable impersonation for the Snowflake integration. If you do not confirm, you will not be able to enable Snowflake low row access policy mode.

Click Save.

If you already have a Snowflake integration configured, you don't need to reconfigure your integration. Your Snowflake policies automatically refresh when you enable Snowflake low row access policy mode.

Configure your Snowflake integration. Note that you will not be able to enable project workspaces or user impersonation with Snowflake low row access policy mode enabled.

Click Save and Confirm your changes.

Starburst (Trino) integration configuration guide: Configure the integration in Immuta.

Map read and write access policies to Starburst (Trino) privileges: Configure how read and write access subscription policies translate to Starburst (Trino) privileges and apply to Starburst (Trino) data sources.

Trino connection reference guide: This guide describes the design and components of the integration when registered with a connection.

Starburst (Trino) integration reference guide: This guide describes the design and components of the integration.

DBFS FUSE mount limitation: This feature cannot be used in environments with E2 Private Link enabled.

For example,

In Python,

Note: This solution also works in R and Scala.

To enable the DBFS FUSE mount, set this configuration in the Spark environment variables: IMMUTA_SPARK_DATABRICKS_DBFS_MOUNT_ENABLED=true.

Mounting a bucket

Users can mount additional buckets to DBFS that can also be accessed using the FUSE mount.

Mounting a bucket is a one-time action, and the mount will be available to all clusters in the workspace from that point on.

Mounting must be performed from a non-Immuta cluster.

Scratch paths will work when performing arbitrary remote filesystem operations with fs magic or Scala dbutils.fs functions. For example,

To support %fs magic and Scala DBUtils with scratch paths, configure

To use dbutils in Python, set this configuration: immuta.spark.databricks.py4j.strict.enabled=false.

This section illustrates the workflow for getting a file from a remote scratch path, editing it locally with Python, and writing it back to a remote scratch path.

Get the file from remote storage:

Make a copy if you want to explicitly edit localScratchFile, as it will be read-only and owned by root:

Write the new file back to remote storage:

GRANT REFERENCE_USAGE ON DATABASE "<Immuta database of the provider account>" TO SHARE "<DATA_SHARE>";dbutils.fs.cp(s3ScratchFile, "file://{}".format(localScratchFile))shutil.copy(localScratchFile, localScratchFileCopy)

with open(localScratchFileCopy, "a") as f:

f.write("Some appended file content")dbutils.fs.cp("file://{}".format(localScratchFileCopy), s3ScratchFile)%sh echo "I'm creating a new file in DBFS" > /dbfs/my/newfile.txt%python

with open("/dbfs/my/newfile.txt", "w") as f:

f.write("I'm creating a new file in DBFS")%fs put -f s3://my-bucket/my/scratch/path/mynewfile.txt "I'm creating a new file in S3"

%scala dbutils.fs.put("s3://my-bucket/my/scratch/path/mynewfile.txt", "I'm creating a new file in S3")<property>

<name>immuta.spark.databricks.scratch.paths</name>

<value>s3://my-bucket/my/scratch/path</value>

</property>%python

import os

import shutil

s3ScratchFile = "s3://some-bucket/path/to/scratch/file"

localScratchDir = os.environ.get("IMMUTA_LOCAL_SCRATCH_DIR")

localScratchFile = "{}/myfile.txt".format(localScratchDir)

localScratchFileCopy = "{}/myfile_copy.txt".format(localScratchDir)Opt to check the Enable Impersonation box and customize the Impersonation Role name as needed. This will allow users to natively impersonate another user. Once you finish configuring the integration, you can grant the IMPERSONATE_USER permission to Immuta users. See the Managing users and permissions guide for instructions.

Opt to update the User Profile Delimiters. This will be necessary if any of the provided symbols are used in user profile information.

Username and Password: Enter the username and password in the Immuta System Account Credentials section. The username and password provided must be the credentials that were set in the bootstrap master script when you created the user.

Entra ID OAuth Client Secret: The values below can be found on the overview page of the application you created in Microsoft Entra ID. Before you enter this information, ensure you have completed the prerequisites for OAuth authentication listed above.

Display Name: This must match the name of the OAuth application you registered.

Tenant Id

Client Id

Client Secret: Enter the Value of the secret, not the secret ID.

Use the authentication method and credentials you provided when initially configuring the integration.

Click Save.

Click Save.

In this example, you would add the following Spark environment variable:

IMMUTA_SPARK_DATABRICKS_TRUSTED_LIB_URIS=maven:/com.github.immuta.hadoop.immuta-spark-third-party-maven-lib-test:2020-11-17-144644This integration works on a per-Dedicated-SQL-pool basis: all of Immuta's policy definitions and user entitlements data need to be in the same pool as the target data sources because Dedicated SQL pools do not support cross-database joins. Immuta creates schemas inside the configured Dedicated SQL pool that contain policy-enforced views that users query.

When the integration is configured, the Application Admin specifies the

Immuta database: This is the pre-existing database Immuta uses. Immuta will create views from the tables contained in this database, and all schemas and views created by Immuta will exist in this database, such as the schemas immuta_system, immuta_functions, and the immuta_procedures that contain the tables, views, UDFs, and stored procedures that support the integration.

Immuta schema: The schema that Immuta manages. All views generated by Immuta for tables registered as data sources will be created in this schema.

User profile delimiters: Since Azure Synapse Analytics dedicated SQL pools do not support array or hash objects, certain user access information is stored as delimited strings; the Application Admin can modify those delimiters to ensure they do not conflict with possible characters in strings.

For a tutorial on configuring the integration see the Azure Synapse Integration page.

Synapse data sources are represented as views and are under one schema instead of a database, so their view names are a combination of their schema and table name, separated by an underscore.

For example, with a configuration that uses IMMUTA as the schema in the database dedicated_pool, the view name for the data source dedicated_pool.tpc.case would be dedicated_pool.IMMUTA.tpc_case.

You can see the view information on the data source details page under Connection Information.

This integration uses webhooks to keep views up-to-date with the corresponding Immuta data sources. When a data source or policy is created, updated, or disabled, a webhook is called that creates, modifies, or deletes the dynamic view in the Immuta schema. Note that only standard views are available because Azure Synapse Analytics Dedicated SQL pools do not support secure views.

The status of the integration is visible on the integrations tab of the Immuta application settings page. If errors occur in the integration, a banner will appear in the Immuta UI with guidance for remediating the error.

The definitions for each status and the state of configured data platform integrations is available in the response schema of the integrations API. However, the UI consolidates these error statuses and provides detail in the error messages.

An Immuta Application Administrator configures the Azure Synapse Analytics integration, registering their initial Synapse Dedicated SQL pool with Immuta.

Immuta creates Immuta schemas inside the configured Synapse Dedicated SQL pool.

A Data Owner registers Azure Synapse Analytics tables in Immuta as data sources. A Data Owner, Data Governor, or Administrator creates or changes a policy or user in Immuta.

Data source metadata, tags, user metadata, and policy definitions are stored in Immuta's Metadata Database.

The Immuta Web Service calls a stored procedure that modifies the user entitlements or policies and updates data source view definitions as necessary.

An Azure Synapse Analytics user who is subscribed to the data source in Immuta in Azure Synapse Analytics and sees policy-enforced data.

Navigate to the App Settings page and click Integration Settings.

Uncheck the Enable Unity Catalog checkbox.

Click Save.

Connect your technology

These guides provide instructions for getting your data set up in Immuta.

: Use domains to segment your data and assign responsibilities to the appropriate team members. These domains will then be used in policies, audit, and identification.

Register your users

These guides provide instructions on setting up your users in Immuta.

: Connect the IAM your organization already uses and allow Immuta to register your users for you.

Add data metadata

These guides provide instructions on getting your data metadata set up in Immuta for use in policies.

: Connect the external catalog your organization already uses and allow Immuta to continually sync your tags with your data sources for you.

Protect and monitor data access

These guides provide instructions on authoring policies and auditing data access.

: Once you add your data metadata to Immuta, you can immediately create policies that utilize your tags and apply to your tables. Subscription policies can be created to dictate access to data sources.

DeltaTable.convertToDelta

CONVERT TO DELTA parquet./path/to/parquet/

DeltaTable.delete

DELETE FROM [table_identifier delta./path/to/delta/] WHERE condition

DeltaTable.generate

GENERATE symlink_format_manifest FOR TABLE [table_identifier delta./path/to/delta]

DeltaTable.history

DESCRIBE HISTORY [table_identifier delta./path/to/delta] (LIMIT x)

See here for a complete list of the Delta SQL Commands.

When a table is created in a project workspace, you can merge a different Immuta data source from that workspace into that table you created.

Create a temporary view of the Immuta data source you want to merge into that table.

Use that temporary view as the data source you add to the project workspace.

Run the following command:

Connect your technology

These guides provide instructions on getting your data set up in Immuta.

Register your Databricks Unity Catalog connection: Using a single setup process, connect Databricks Unity Catalog to Immuta. This will register your data objects into Immuta and allow you to start dictating access through global policies.

: Use domains to segment your data and assign responsibilities to the appropriate team members. These domains will then be used in policies, audit, and identification.

Connections are generally available on all 2025.1+ tenants. If you do not have connections enabled on your tenant, and using the legacy workflow.

Register your users

These guides provide instructions on getting your users set up in Immuta.

: Bring the IAM your organization already uses and allow Immuta to register your users for you.

Add data metadata

These guides provide instructions on getting your data metadata set up in Immuta.

: Bring the external catalog your organization already uses and allow Immuta to continually sync your tags with your data sources for you.

Start using the Governance app

These guides provide instructions on using the Governance app for the first time.

: Once you add your data metadata to Immuta, you can immediately create policies that utilize your tags and apply to your tables. Subscription policies can be created to dictate access to data sources.

Connect your technology

These guides provide instructions on getting your data set up in Immuta.

Register your Snowflake connection: Using a single setup process, connect Snowflake to Immuta. This will register your data objects into Immuta and allow you to start dictating access through global policies.

Organize your data sources into domains and assign domain permissions to accountable teams: Use domains to segment your data and assign responsibilities to the appropriate team members. These domains will then be used in policies, audit, and identification.

Connections are generally available on all 2025.1+ tenants. If you do not have connections enabled on your tenant, and using the legacy workflow.

Register your users

These guides provide instructions on getting your users set up in Immuta.

Connect an IAM: Bring the IAM your organization already uses and allow Immuta to register your users for you.

Map external user IDs from Snowflake to Immuta: Ensure the user IDs in Immuta, Snowflake, and your IAM are aligned so that the right policies impact the right users.

Add data metadata

These guides provide instructions on getting your data metadata set up in Immuta.

Connect an external catalog: Bring the external catalog your organization already uses and allow Immuta to continually sync your tags with your data sources for you.

: Identification allows you to automate data tagging using identifiers that detect certain data patterns.

Start using the Governance app

These guides provide instructions on using the Governance app for the first time.

Author a global subscription policy: Once you add your data metadata to Immuta, you can immediately create policies that utilize your tags and apply to your tables. Subscription policies can be created to dictate access to data sources.

: Data metadata can also be used to create data policies that apply to data sources as they are registered in Immuta. Data policies dictate what data a user can see once they are granted access to a data source. Using catalog and identification applied tags you can create proactive policies, knowing that they will apply to data sources as they are added to Immuta with the automated tagging.

: Once you have your data sources and users, and policies granting them access, you can set up audit export. This will export the audit logs from user queries, policy changes, and tagging updates.

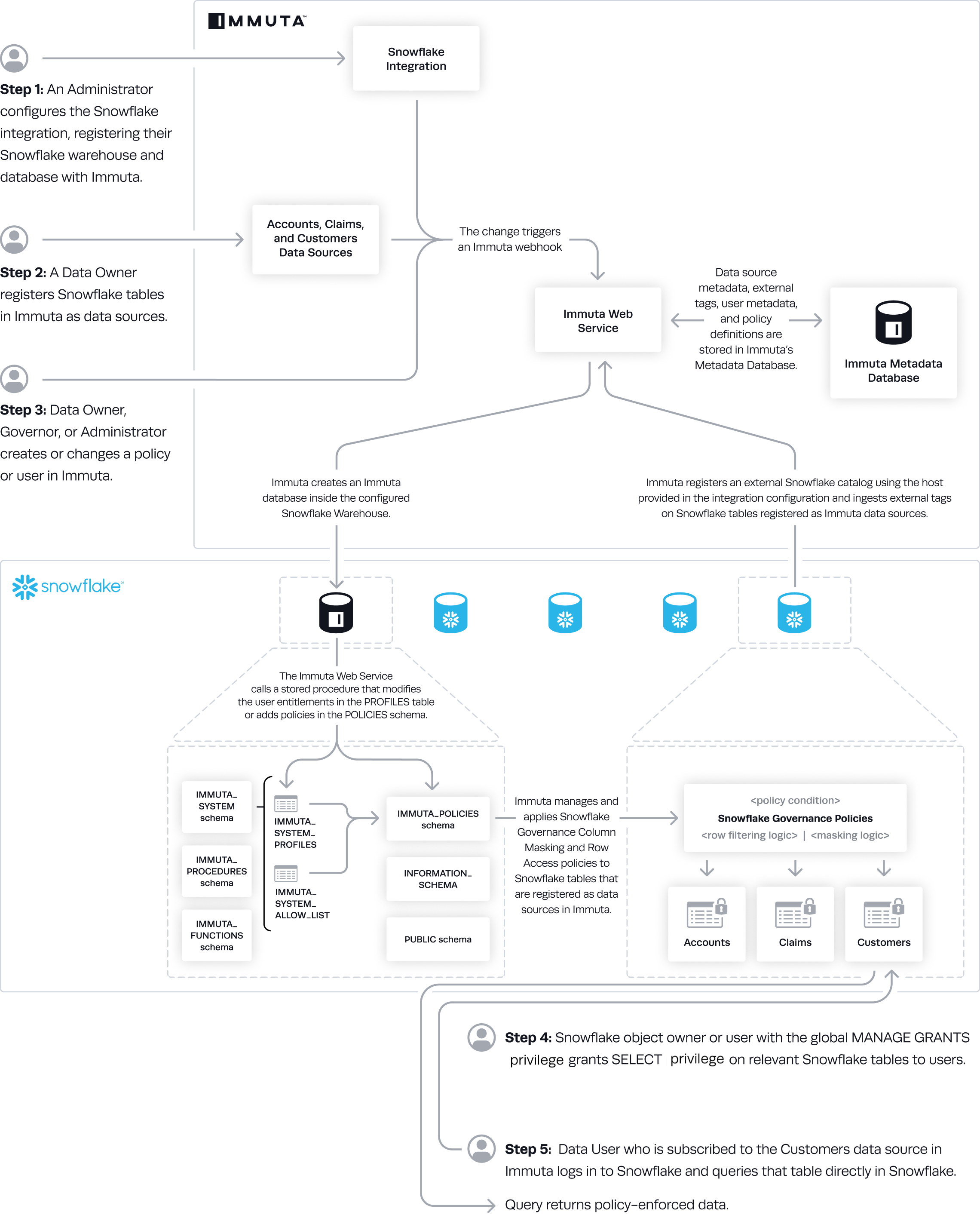

Immuta manages access to Snowflake tables by administering Snowflake row access policies and column masking policies on those tables, allowing users to query them directly in Snowflake while policies are enforced.

Without Snowflake low row access policy mode enabled, row access policies are created and administered by Immuta in the following scenarios:

Table grants are disabled and a subscription policy that does not automatically subscribe everyone to the data source is applied. Immuta administers Snowflake row access policies to filter out all the rows to restrict access to the entire table when the user doesn't have privileges to query it. However, if table grants are disabled and a subscription policy is applied that grants everyone access to the data source automatically, Immuta does not create a row access policy in Snowflake. See the subscription policies page for details about these policy types.

Purpose-based policy is applied to a data source. A row access policy filters out all the rows of the table if users aren't acting under the purpose specified in the policy when they query the table.

Row-level security policy is applied to a data source. A row access policy filters out rows querying users don't have access to.

is enabled. A row access policy is created for every Snowflake table registered in Immuta.

Snowflake low row access policy mode is enabled by default to reduce the number of row access policies Immuta creates and improve query performance. Snowflake low row access policy mode requires

user impersonation to be disabled. User impersonation diminishes the performance of interactive queries because of the number of row access policies Immuta creates when it's enabled.

Project-scoped purpose exceptions for Snowflake integrations allow you to apply purpose-based policies to Snowflake data sources in a project. As a result, users can only access that data when they are working within that specific project.

This feature allows masked columns to be joined across data sources that belong to the same project. When data sources do not belong to a project, Immuta uses a unique salt per data source for hashing to prevent masked values from being joined. (See the Why use masked joins? guide for an explanation of that behavior.) However, once you add Snowflake data sources to a project and enable masked joins, Immuta uses a consistent salt across all the data sources in that project to allow the join.

For more information about masked joins and enabling them for your project, see the Masked joins section of documentation.

Project workspaces are not compatible with this feature.

Impersonation is not supported when the Snowflake low row access policy mode is enabled.

Private preview: The Amazon S3 integration is available to select accounts. Contact your Immuta representative for details.

Navigate to the My Data Sources page in Immuta.

Click New Data Source.

Select the S3 tile in the data platform section.

Select your AWS Account/Region from the dropdown menu.

Opt to select a to which data sources will be assigned.

Opt to add default tags to the data sources.

Click Next.

The prefix field is populated with the base path. Add to this prefix to create a data source for a prefix, bucket, or object.

If the data source prefix ends in a wildcard (*), it protects all items starting with that prefix. For example, a base location of s3:// and a data source prefix surveys/2024* would protect paths like s3://surveys/2024-internal/research-dept.txt or s3://surveys/2024-customer/april/us.csv.

Click Add Prefix, and then click Next.

Verify that your prefixes are correct and click Complete Setup.

This page describes the Azure Synapse integration, configuration options, and features. See the Azure Synapse integration page for a tutorial on enabling the integration and these features through the app settings page.

A running dedicated SQL pool

The Azure Synapse Analytics integration supports the following authentication methods to configure the integration and create data sources:

Username and password: Immuta supports SQL authentication with username and password for Azure Synapse Analytics. See the for details.

OAuth authentication with Microsoft Entra ID: You can use this authentication method to register data sources or configure the Azure Synapse Analytics integration using the . To use this authentication method, OAuth must be set up via . See the for details about using OAuth authentication with Microsoft Entra ID.

Immuta cannot ingest tags from Synapse, but you can connect any of these to work with your integration.

Impersonation allows users to query data as another Immuta user in Azure Synapse Analytics. To enable user impersonation, see the .

A user can to a single Immuta tenant.

Immuta does not support the following masking types in this integration because of limitations with dedicated SQL pools (linked below). Any column assigned one of these masking types will be masked to NULL:

Reversible Masking: Synapse UDFs currently only support SQL, but Immuta needs to execute code (such as JavaScript or Python) to support this masking feature. See the .

Format Preserving Masking: Synapse UDFs currently only support SQL, but Immuta needs to execute code (such as JavaScript or Python) to support this masking feature. See the .

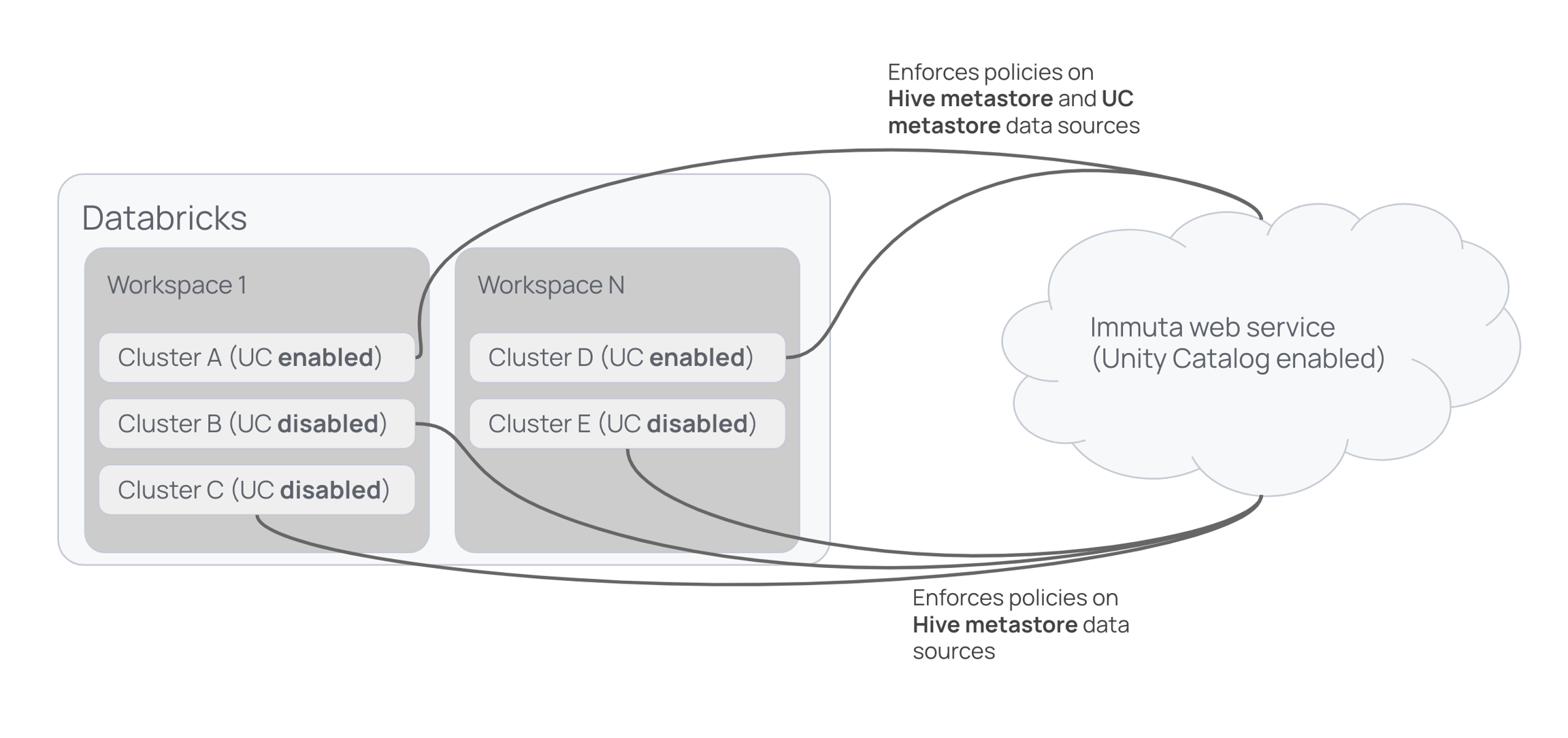

Immuta offers two integrations for Databricks:

Databricks Unity Catalog integration: This integration supports working with database objects registered in Unity Catalog.

Databricks Spark integration: This integration supports working with database objects registered in the legacy Hive metastore.

To determine which integration you should use, evaluate the following elements:

Cluster runtime

Databricks Runtime 11.3 and newer: See the list below to determine which integration is supported for your data's location.

Location of data: Where is your data?

Databricks metastore magic allows you to migrate your data from the Databricks legacy Hive metastore to the Unity Catalog metastore while protecting data and maintaining your current processes in a single Immuta instance.

Databricks metastore magic is for organizations who intend to use the , but must still protect tables in the Hive metastore until they can migrate all of their data to Unity Catalog.

Unity Catalog support is enabled in Immuta.

Databricks has two built-in metastores that contain metadata about your tables, views, and storage credentials:

Legacy Hive metastore: Created at the workspace level. This metastore contains metadata of the registered securables in that workspace available to query.

Unity Catalog metastore: Created at the account level and is attached to one or more Databricks workspaces. This metastore contains metadata of the registered securables available to query. All clusters on that workspace use the configured metastore and all workspaces that are configured to use a single metastore share those securables.

Databricks allows you to use the legacy Hive metastore and the Unity Catalog metastore simultaneously. However, Unity Catalog does not support controls on the Hive metastore, so you must attach a Unity Catalog metastore to your workspace and move existing databases and tables to the attached Unity Catalog metastore to use the governance capabilities of Unity Catalog.

Immuta's Databricks Spark integration and Unity Catalog integration enforce access controls on the Hive and Unity Catalog metastores, respectively. However, because these metastores have two distinct security models, users were discouraged from using both in a single Immuta instance before metastore magic; the Databricks Spark integration and Unity Catalog integration were unaware of each other, so using both concurrently caused undefined behavior.

Metastore magic reconciles the distinct security models of the legacy Hive metastore and the Unity Catalog metastore, allowing you to use multiple metastores (specifically, the Hive metastore or alongside Unity Catalog metastores) within a Databricks workspace and single Immuta instance and keep policies enforced on all your tables as you migrate them. The diagram below shows Immuta enforcing policies on registered tables across workspaces.

In clusters A and D, Immuta enforces policies on data sources in each workspace's Hive metastore and in the Unity Catalog metastore shared by those workspaces. In clusters B, C, and E (which don't have Unity Catalog enabled in Databricks), Immuta enforces policies on data sources in the Hive metastores for each workspace.

With metastore magic, the Databricks Spark integration enforces policies only on data in the Hive metastore, while the Unity Catalog integration enforces policies on tables in the Unity Catalog metastore.

To enforce plugin-based policies on Hive metastore tables and Unity Catalog native controls on Unity Catalog metastore tables, enable the and the Databricks Unity Catalog integration. Note that some Immuta policies are not supported in the Databricks Unity Catalog integration. See the for details.

Databricks SQL cannot run the Databricks Spark plugin to protect tables, so Hive metastore data sources will not be policy enforced in Databricks SQL.

To enforce policies on data sources in Databricks SQL, use to manually lock down Hive metastore data sources and the Databricks Unity Catalog integration to protect tables in the Unity Catalog metastore. Table access control is enabled by default on SQL warehouses, and any Databricks cluster without the Immuta plugin must have table access control enabled.

Once a Databricks securable is registered in Immuta as a data source and you are subscribed to that data source, you must access that data through SQL:

df = spark.sql("select * from immuta.table")import org.apache.spark.sql.SparkSession

%sql

select * from immuta.tablelibrary(SparkR)

df <- SparkR::sql(With R, you must load the SparkR library in a cell before accessing the data.

See the sections below for more guidance on accessing data using Delta Lake, direct file reads in Spark for file paths, and user impersonation.

When using Delta Lake, the API does not go through the normal Spark execution path. This means that Immuta's Spark extensions do not provide protection for the API. To solve this issue and ensure that Immuta has control over what a user can access, the Delta Lake API is blocked.

Spark SQL can be used instead to give the same functionality with all of Immuta's data protections. See the for a list of corresponding Spark SQL calls to use.

In addition to supporting direct file reads through workspace and scratch paths, Immuta allows direct file reads in Spark for file paths. As a result, users who prefer to interact with their data using file paths or who have existing workflows revolving around file paths can continue to use these workflows without rewriting those queries for Immuta.

When reading from a path in Spark, the Immuta Databricks Spark plugin queries the Immuta Web Service to find Databricks data sources for the current user that are backed by data from the specified path. If found, the query plan maps to the Immuta data source and follows existing code paths for policy enforcement.

Users can read data from individual parquet files in a sub-directory and partitioned data from a sub-directory (or by using a where predicate). Expand the blocks below to view examples of reading data using these methods.

To read from an individual file, load a partition file from a sub-directory:

To read partitioned data from a sub-directory, load a parquet partition from a sub-directory:

Alternatively, load a parquet partition using a where predicate:

Direct file reads for Immuta data sources only apply to data sources created from tables, not data sources created from views or queries.

If more than one data source has been created for a path, Immuta will use the first valid data source it finds. It is therefore not recommended to use this integration when more than one data source has been created for a path.

In Databricks, multiple input paths are supported as long as they belong to the same data source.

User impersonation allows Databricks users to query data as another Immuta user. To impersonate another user, see the .

Once your data platform integration is configured, Immuta periodically runs queries in that data platform to orchestrate policies or implement various features. Depending on your configuration, data platform cost model, and data platform query load, there may be incremental cost incurred when various Immuta features are enabled. The actions and features that trigger Immuta queries in your remote platform are listed below.

Configuring an integration or registering a connection: Immuta uses compute resources to set up the integration in the data platform. After the integration is configured, Immuta runs periodic validation queries to ensure the integration is still healthy. By default, this simple SELECT query is run once per hour to validate that the credentials, connection information, and network configuration are all functional.

Registering data objects and data sources: Immuta uses compute resources to register data objects and data sources. If schema monitoring is enabled when registering a data source, Immuta uses the compute warehouse that was employed during the initial data source registration to periodically monitor the schema for changes. To adjust the schedule of the schema monitoring job to reduce cost, see the . Additionally, these actions will use compute resources:

Data object or data source disabled

Data object or data source enabled

Data object or data source deleted

Policy applied to a data source: Immuta uses compute resources to orchestrate policies in the data platform. Consider registering data before creating global policies. Immuta does not apply a subscription policy on registered data unless an existing global policy applies to it, which allows Immuta to only pull metadata instead of also applying policies when data sources are created. Registering data before policies are created reduces the workload and the compute resources needed; Immuta will only perform a grant for the user who registered the data source. The following actions that trigger updates to policies will also use compute resources:

External user ID modifications

Group name changes

Scheduled audit ingest or manually-triggered audit ingest (clicking the Load Audit Events button): Generally, the data platform cost from enabling query audits is directly related to warehouse uptime governed by the audit frequency and average query compute cost. During query audit retrieval, Immuta runs standard query operations (e.g., SELECT) against the system views and does not use other data transfer methods that incur additional data egress costs. For example, during query audit retrieval for Snowflake, Immuta will use the Snowflake warehouse that was configured during integration registration to query the Snowflake system views. If this warehouse is stopped, Immuta will start it.

: To evaluate your data, Immuta generates a SQL query to execute in the remote technology. The query result contains the column name and the matching identifiers, and Immuta applies tags to the appropriate columns.

This evaluating and tagging process occurs when identification runs, which happens from the following events:

A new data source is created.

is enabled and a new data source is detected.

The how-to guides linked on this page illustrate how to integrate Starburst (Trino) with Immuta. See the reference guide for information about the Starburst (Trino) integration.

Connect your technology

These guides provide instructions on getting your data set up in Immuta.

: Using a single setup process, connect Trino to Immuta. This will register your data objects into Immuta and allow you to start dictating access through global policies.

: Use domains to segment your data and assign responsibilities to the appropriate team members. These domains will then be used in policies, audit, and identification.

Connections are generally available on all 2026.1+ tenants. If you do not have connections enabled on your tenant, reach out to your Immuta support professional.

Register your users

These guides provide instructions on getting your users set up in Immuta.

: Bring the IAM your organization already uses and allow Immuta to register your users for you.

Add data metadata

These guides provide instructions on getting your data metadata set up in Immuta.

: Bring the external catalog your organization already uses and allow Immuta to continually sync your tags with your data sources for you.

Start using the Governance app

These guides provide instructions on using the Governance app for the first time.

: Once you add your data metadata to Immuta, you can immediately create policies that utilize your tags and apply to your tables. Subscription policies can be created to dictate access to data sources.

The warehouse you select when configuring the Snowflake integration uses compute resources to set up the integration, register data sources, orchestrate policies, and run jobs like identification. Snowflake credit charges are based on the size of and amount of time the warehouse is active, not the number of queries run.

This document prescribes how and when to adjust the size and scale of clusters for your warehouse to manage workloads so that you can use Snowflake compute resources the most cost effectively.

In general, increase the size of and number of clusters for the warehouse to handle heavy workloads and multiple queries. Workloads are typically lighter after data sources are onboarded and policies are established in Immuta, so compute resources can be reduced after those workloads complete.

The Snowflake integration uses warehouse compute resources to sync policies created in Immuta to the Snowflake objects registered as data sources and, if enabled, to run and . Follow the guidelines below to adjust the warehouse size and scale according to your needs.

Increase the of and of clusters for the warehouse during large policy syncs, updates, and changes.

Enable to optimize resource use in Snowflake. In the Snowflake UI, the lowest auto suspend time setting is 5 minutes. However, through SQL query, you can set auto_suspend to 61 seconds (since the minimum uptime for a warehouse is 60 seconds). For example,

Identification uses compute resources for each table it runs on. Consider when registering data sources if you have an

For more details and guidance about warehouse sizing, see the .

Even after your integration is configured, data sources are registered, and policies are established, changes to those data sources or policies may initiate heavy workloads. Follow the guidelines below to adjust your warehouse size and scale according to your needs.

Review your to identify query performance and bottlenecks.

Check how many credits queries have consumed:

After reviewing query performance and cost, implement to adjust your warehouse.

Immuta manages access to Snowflake tables by administering Snowflake row access policies and column masking policies on those tables, allowing users to query tables directly in Snowflake while dynamic policies are enforced.

This getting started guide outlines how to integrate your Snowflake account with Immuta.

: Migrate to using Snowflake table grants in your Snowflake integration.

: Manage integration settings or delete your existing Snowflake integration.

: This reference guide describes the design and features of the Snowflake integration.

: Organizations can share the policy-protected data of their Snowflake database with other Snowflake accounts with Immuta policies enforced in real time. This guide describes the components of using Immuta with Snowflake data shares.

: Snowflake column lineage specifies how data flows from source tables or columns to the target tables in write operations. When Snowflake lineage tag propagation is enabled in Immuta, Immuta automatically applies tags added to a Snowflake table to its descendant data source columns in Immuta so you can build policies using those tags to restrict access to sensitive data.

The how-to guides linked on this page illustrate how to integrate Redshift with Immuta. See the reference guide for information about the Redshift integration.

Requirement: Redshift cluster with an RA3 node is required for the multi-database integration. For other instance types, you may configure a single-database integration using one of the Redshift Spectrum options.

Connect your technology

These guides provide instructions on getting your data set up in Immuta.

: Configure a Redshift integration with Immuta so that Immuta can create policy protected views for your users to query.

: This will register your data objects into Immuta and allow you to start dictating access through global policies.

s: Use domains to segment your data and assign responsibilities to the appropriate team members. These domains will then be used in policies and identification.

Register your users

These guides provide instructions on getting your users set up in Immuta.

: Bring the IAM your organization already uses and allow Immuta to register your users for you.

Add data metadata

These guides provide instructions on getting your data metadata set up in Immuta.

: Bring the external catalog your organization already uses and allow Immuta to continually sync your tags with your data sources for you.

Start using the Governance app

These guides provide instructions on using the Governance app for the first time.

: Once you add your data metadata to Immuta, you can immediately create policies that utilize your tags and apply to your tables. Subscription policies can be created to dictate access to data sources.

Private preview: This feature is available to select accounts. Contact your Immuta representative for details.

Snowflake Enterprise Edition

Snowflake X-Large or Large warehouse is strongly recommended

Make a request to the Immuta V2 API , as the Immuta UI does not support creating more than 1000 data sources. The following options must be specified in your request to ensure the maximum performance benefits of bulk data source creation. The Skip Stats Job tag is only required if you are using ; otherwise, Snowflake data sources automatically skip the stats job.

Specifying disableSensitiveDataDiscovery as true ensures that will not be applied when the new data sources are created in Immuta, regardless of how it is configured for the Immuta tenant. Disabling identification improves performance during data source creation.

Applying the Skip Stats Job tag using the tableTag value will ensure that some jobs that are not vital to data source creation are skipped, specifically the fingerprint and high cardinality check jobs.

When the Snowflake bulk data source creation feature is configured, the create data source endpoint operates asynchronously and responds immediately with a bulkId that can be used for monitoring progress.

To monitor the progress of the background jobs for the bulk data source creation, make the following request using the bulkId from the response of the previous step:

The response will contain a list of job states and the number of jobs currently in each state. If errors were encountered during processing, a list of errors will be included in the response:

With these recommended configurations, bulk creating 100,000 Snowflake data sources will take between six and seven hours for all associated jobs to complete.

Private preview: This feature is available to select accounts. Contact your Immuta representative to enable this feature.

Contact your Immuta representative to enable this feature in your Immuta tenant.

Navigate to the App Setting page and click the Integration tab.

Click +Add Integration and select Snowflake from the dropdown menu.

Complete the Host, Port, and Default Warehouse fields.

.